VGA framegrabbing with TVP7002

Okay, maybe it's finally time to write something about this project I've been working on for quite a while. It has finally reached a stage where it's quite cool!

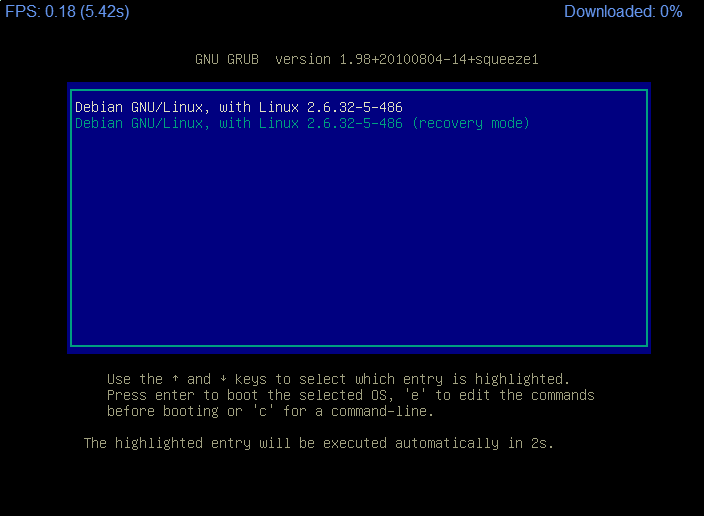

The story is that, for some strange reason, I've become very interested in building a network-based KVM for managing my home server remotely. To do this, I need to be able to capture the VGA output of a PC and transfer it over some medium (Ethernet) to another PC.

What I want to do, is basically this:

I've had the frame capturing part of the project working for a while now, and just recently I managed to get Ethernet working too.

Currently I've managed to do this using the following devices:

- TI TVP7002 video digitizing chip

- NXP LPC1768 32-bit ARM Cortex-M3 microcontroller

- Altera MAX II EPM240 CPLD

- ISSI IS61LV5128AL 512Kx8 SRAM

- Microchip ENC28J60 Ethernet controller

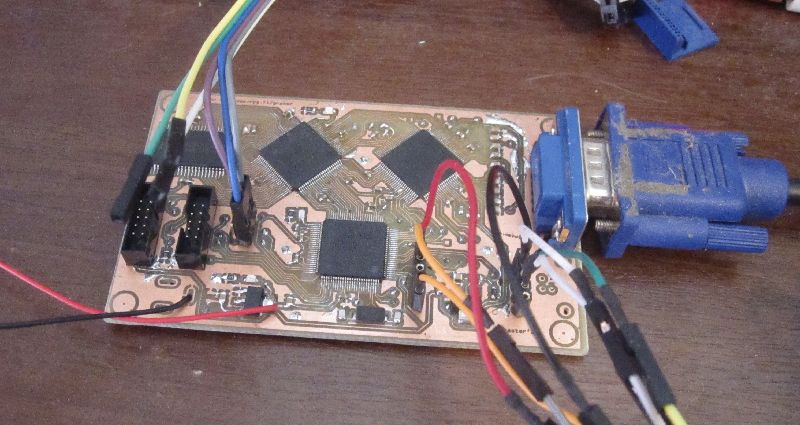

The board is ugly, full of kludges and completely temporary.

An online demo of the web interface can be seen here. If the image is not changing, that probably means my board is offline, and only a cached image can be seen.

I'll go into a little detail below. If this gets any attention, I would be happy to write in more detail about various aspects of the project.

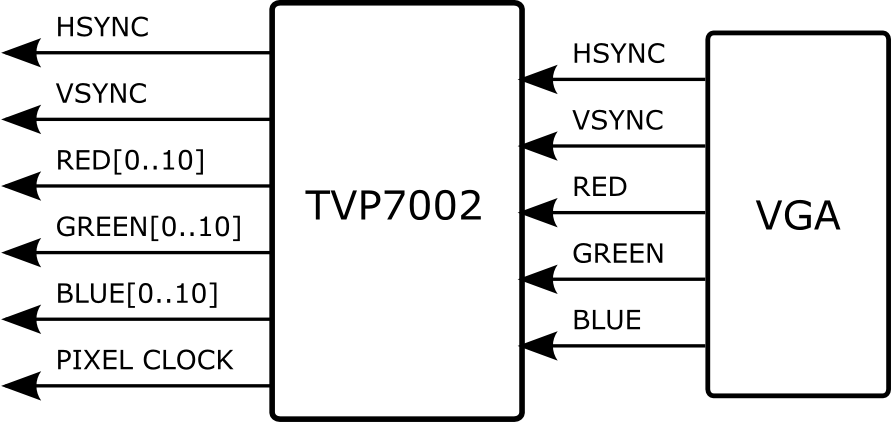

TVP7002

The project got a nice boost when I found the TVP7002 on TI's site. It's a video digitizing chip, featuring three ADCs (one for each color channel) and a PLL system for generating the pixel clock from the HSYNC input. Compared to the alternatives, it seemed to be quite nicely priced and readily available. In fact, TI was kind enough to provide me with free samples!

Without this chip, or a chip like it, I would have no hope of implementing a project like this. Since I don't really know much about electronics, it's very helpful to be able to hide all the scary and magical stuff away.

Here's a simplification of what the chip does in my project:

So instead of having to deal with the analog signals myself, or having to generate an appropriate pixel clock, the TVP7002 does this for me. After setting up the chip appropriately, I'm left with easy to understand digital signals.

To read this image data, I basically need to keep up with the sync signals to know when a frame or a line starts. On each pixel clock, I can read the color channels for pixel color data. This is already well familiar to me from my experiments with the OV7670 camera (see my blog entries here and here).
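The read-out idea above can be modelled in a few lines of Python. This is only a software sketch, not the Verilog running on the board: the `(vsync, hsync, pixel)` sample layout, the one-tick active-high sync pulses and the function names are all assumptions made for illustration, and real VGA blanking, porches and sync polarity are ignored.

```python
def make_stream(frame):
    """Yield (vsync, hsync, pixel) samples, one per pixel clock, for one frame.

    Assumption: each sync pulse occupies its own clock tick and carries no pixel.
    """
    yield (1, 0, None)               # frame start
    for row in frame:
        yield (0, 1, None)           # line start
        for pixel in row:
            yield (0, 0, pixel)      # active video


def capture(stream):
    """Rebuild one frame from a sample stream by following the sync signals."""
    frame = []
    started = False
    for vsync, hsync, pixel in stream:
        if vsync:
            if started:
                break                # the next frame begins: we are done
            started = True           # first frame start seen
            frame = []
        elif not started:
            continue                 # ignore everything before the first vsync
        elif hsync:
            frame.append([])         # open a new line
        elif frame:
            frame[-1].append(pixel)  # store the pixel on the current line
    return frame


source = [[1, 2, 3], [4, 5, 6]]
print(capture(make_stream(source)))
```

The capture loop starts in the middle of some frame, so it has to throw samples away until the first frame start arrives. The same applies to any hardware version of this logic.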

FPGA / CPLD

The speed at which I must read the pixel data is pretty high: 25.175 MHz for standard VGA resolution. It's not really easy to use a standard microcontroller for this, so an FPGA is a better choice. In theory, the data could be clocked directly into some FIFO RAM, as is done on the OV7670 module (see here). However, I did not really bother looking for suitable RAM like that, and decided to process the signals myself instead.
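To put that pixel clock in perspective, here is a small back-of-the-envelope calculation. The 25.175 MHz figure is from the text. The 800 x 525 total raster size (visible area plus blanking) is the usual timing for 640x480@60 and is assumed here rather than measured on this board.

```python
PIXEL_CLOCK_HZ = 25_175_000  # pixel clock for standard VGA, from the text
H_TOTAL = 800                # 640 visible pixels + horizontal blanking (assumed standard timing)
V_TOTAL = 525                # 480 visible lines + vertical blanking (assumed standard timing)


def vga_timing(pixel_clock_hz, h_total, v_total):
    """Return (pixel period in ns, line rate in Hz, frame rate in Hz)."""
    pixel_period_ns = 1e9 / pixel_clock_hz
    line_rate_hz = pixel_clock_hz / h_total
    frame_rate_hz = line_rate_hz / v_total
    return pixel_period_ns, line_rate_hz, frame_rate_hz


pixel_ns, line_hz, frame_hz = vga_timing(PIXEL_CLOCK_HZ, H_TOTAL, V_TOTAL)
print(f"pixel period: {pixel_ns:.2f} ns")
print(f"line rate:    {line_hz / 1e3:.2f} kHz")
print(f"frame rate:   {frame_hz:.2f} Hz")
```

With just under 40 ns per pixel, there is very little time to sample, address and store each value, which is why dedicated logic is a more comfortable fit than a general-purpose microcontroller.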

Some captured data when things were not that perfect yet

At first, I set out to read one pixel at a precise location. This would be enough to prove that the setup can work. This was successful, and I was able to draw a full frame by reading one pixel at a time – each pixel from a different frame. Transferring the image to a PC took a very very long time, depending on how I packed the data. At this point, I was not using any external RAM yet.
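The one-pixel-per-frame trick can be sketched as follows. This is a software model under stated assumptions, not the actual capture code: `get_frame` is a hypothetical callback standing in for "wait for frame n", and the source image is assumed to stay static for the whole capture.

```python
def capture_one_pixel_per_frame(get_frame, width, height):
    """Build an image by taking pixel n from frame n (assumes a static picture)."""
    image = [[None] * width for _ in range(height)]
    for n in range(width * height):
        frame = get_frame(n)         # hypothetical: returns the n-th frame seen
        y, x = divmod(n, width)      # location sampled during this frame
        image[y][x] = frame[y][x]
    return image


def capture_seconds(width, height, frame_rate_hz):
    """Time needed when exactly one pixel is taken from every frame."""
    return width * height / frame_rate_hz


static = [[10 * y + x for x in range(4)] for y in range(3)]
print(capture_one_pixel_per_frame(lambda n: static, 4, 3) == static)
print(capture_seconds(640, 480, 60.0) / 60, "minutes")
```

For 640x480 that is 307,200 frames. At roughly 60 frames per second the capture alone takes about 85 minutes, before any time spent moving the data to the PC.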

Later, adding SRAM to the project was relatively easy, using this simple Verilog SRAM controller as a base. The SRAM access timing is determined by the CPLD clock, which is also the pixel clock: normally 25.175 MHz for standard VGA resolution. Read operations also use the negative edges of the clock.

Originally, I used an Altera Cyclone II with a cheap development board from eBay, but since the required logic is very simple, I later switched to a CPLD. The transition was pretty easy, but the logic had to be simplified by replacing SPI with simple IO signals.

Microcontroller – LPC1768

The choice of microcontroller is not really a critical part of this project. Once a video frame has been captured to SRAM using the CPLD and TVP7002, transferring a full, beautiful image to the PC can be done at any speed, using any desired protocol. That said, I will not be making an Arduino shield of my project!

Currently I'm clocking the data from the SRAM to the microcontroller at around 400kHz. I could go faster (if the MCU can keep up), or slower (if the MCU can't keep up). Although I've successfully utilized DMA for this, it didn't seem to be worth the effort at the time, and right now I'm using simple GPIO routines instead. There's definitely room for optimization here, which I will look into later for improving the frame rate.
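As a rough estimate of what the 400 kHz read-out means for frame rate, the sketch below computes the time to drain one frame from SRAM. It assumes one byte per pixel and one byte transferred per read clock; the text states neither, so treat both as guesses.

```python
def readout_seconds(width, height, read_clock_hz, bytes_per_pixel=1, bytes_per_clock=1):
    """Time to clock one full frame out of SRAM into the microcontroller.

    bytes_per_pixel and bytes_per_clock are assumptions, not figures from the post.
    """
    total_bytes = width * height * bytes_per_pixel
    return total_bytes / (read_clock_hz * bytes_per_clock)


seconds = readout_seconds(640, 480, 400_000)
print(f"{seconds:.3f} s per frame, at most {1 / seconds:.2f} frames per second")
```

Under those assumptions the read-out alone takes about 0.77 s per frame, so it caps the frame rate at a little over one frame per second regardless of how fast the network side is.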

The choice of microcontroller may be important for some other things though. I wish to use Ethernet for the data transmission, and the LPC1768 has an Ethernet MAC built in. However, currently I'm using the ENC28J60, which could be used with any microcontroller.

In the future this project would benefit from having USB support for implementing an HID keyboard, so if the LPC1768/69 turns out to be overkill, I could perhaps use something like the LPC11U14 instead. For now I'll stick with the LPC1768.

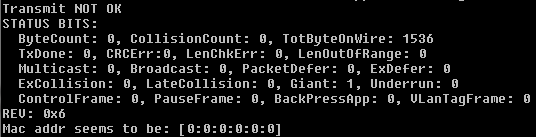

ENC28J60

Although LPC1768 has a built-in Ethernet MAC, I decided to start my Ethernet experiments with Microchip ENC28J60 instead. This was simply because ENC28J60 seemed more approachable with many working examples available for inspection.

I started by porting over this stellaris-enc28j60-booster project, which uses the uIP stack. This worked fine, but the download rates I got with uIP were pretty slow. That was to be expected, since uIP is not really designed for high throughput.

Using the same ENC28J60 driver as a base, I switched the stack to lwIP. Porting the stack was kind of straightforward, as described by this document, but certainly not easy for me. Later, after I had gotten the lwIP stack working, I realized the same Stellaris ENC28J60 project also had a lwIP branch here, but it was too late.

With this stack, my top transfer speeds are around 300KB/s, which is pretty nice. Due to slowdowns from the image transfer, I'm currently transferring stuff at around 100KB/s.
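To see what those rates mean per image, here is a quick calculation of the time needed to send one frame. It assumes an uncompressed 640x480 frame at one byte per pixel and reads "KB" as 1000 bytes. Neither is stated in the text, and protocol overhead is ignored.

```python
FRAME_BYTES = 640 * 480  # assumed: uncompressed, one byte per pixel


def send_seconds(payload_bytes, rate_kb_per_s, bytes_per_kb=1000):
    """Time to push a payload through the network at a given throughput."""
    return payload_bytes / (rate_kb_per_s * bytes_per_kb)


for rate in (300, 100):  # peak and current throughput quoted in the text
    print(f"{rate} KB/s: {send_seconds(FRAME_BYTES, rate):.2f} s per frame")
```

So at the peak rate a frame would take about a second to send, and at the current rate about three seconds.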

I think the lwIP stack is probably working perfectly, but the ENC28J60 is still giving me a headache. At the moment, it can run for 24 hours without a problem, and then suddenly stop working for no good reason. After multiple resets and ritual sacrifices, it will resume normal operation again. It could be some problem with the breakout board I'm using, and I'll try not to get too anxious over it for now.

The future, and stuff

This writeup is rather short and without much detail, but each part of this project has been a huge effort. Later I do hope to write in more detail about some things.

When I started working on this, I knew very little about electronics. Now that I've gotten this far, I still know very little about electronics, but a tiny bit more about digital electronics. My background as a code monkey has certainly helped a lot in understanding this stuff.

Right now, there's plenty of stuff to tweak and improve in the current design. At the moment, things are hardcoded in, and the board just assumes the input is always 640x480@60. There's a lot I can do regarding that by just writing more code.

However, it's also time to start planning some hardware improvements. First thing in mind is a new board with the design and manufacturing errors fixed. Another thing is probably to start looking into the LPC1768 built-in MAC and the LAN8720 PHY.

I don't know what I will do about memory yet, but obviously more is needed if I want to support bigger resolutions. Faster pixel clock rates will also require some consideration.
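A quick budget check shows where the current parts run out. The SRAM size (512K x 8) and the 25.175 MHz clock are from the text. The 40 MHz and 65 MHz pixel clocks are the standard values for 800x600@60 and 1024x768@60, and one byte per pixel is an assumption.

```python
SRAM_BYTES = 512 * 1024  # IS61LV5128AL: 512K x 8

# name: (width, height, pixel clock in Hz)
MODES = {
    "640x480@60": (640, 480, 25.175e6),
    "800x600@60": (800, 600, 40.0e6),
    "1024x768@60": (1024, 768, 65.0e6),
}


def mode_budget(width, height, pixel_clock_hz, bytes_per_pixel=1):
    """Return (frame size in bytes, fits in SRAM?, pixel period in ns)."""
    frame_bytes = width * height * bytes_per_pixel  # bytes_per_pixel is assumed
    return frame_bytes, frame_bytes <= SRAM_BYTES, 1e9 / pixel_clock_hz


for name, (w, h, clk) in MODES.items():
    size, fits, period_ns = mode_budget(w, h, clk)
    print(f"{name}: {size} bytes, fits={fits}, {period_ns:.1f} ns per pixel")
```

Under these assumptions 800x600 would still fit in the existing SRAM, while 1024x768 would not. In both cases the time available per pixel shrinks considerably, to 25 ns and about 15 ns.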

And USB support.. some day...

Code

I've dumped the microcontroller and Verilog code on GitHub here. I don't intend this code to be used by anyone as-is, but maybe some bits of it could be useful to someone.

Schematic is also available here: grabor v0.1 schematic

Links

Here are some links I found useful during this project:

- Example code for the Stellaris enc28j60 booster pack

- Another enc28j60 example on AVR

- lwIP implementation and RawTCP

- FPGA tutorials at fpga4fun.com

- The Video Converters forum at ti.com

- Freddie Chopin's helpful project templates

- svofski's brain

- MBED-NXP-LPC1768-LWIP
